Heads Collapse, Features Stay: Why Replay Needs Big Buffers

*Equal Contribution

Continual Learning & Experience Replay

Continual Learning

Train on a sequence of tasks without forgetting

Learn new tasks sequentially

Experience Replay

Store samples from past tasks in a buffer

Cost: Scales with buffer size
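The slide does not say how the buffer is filled. Reservoir sampling is a common choice in replay-based continual learning: it keeps a uniform random subset of everything seen so far under a fixed memory budget. Here is a minimal sketch under that assumption. `ReservoirBuffer` is a hypothetical class, not the paper's code.

```python
import random
from typing import Generic, List, TypeVar

T = TypeVar("T")


class ReservoirBuffer(Generic[T]):
    """Fixed-capacity replay buffer filled by reservoir sampling.

    After n_seen insertions, every sample seen so far is stored with
    probability capacity / n_seen, regardless of which task it came from.
    """

    def __init__(self, capacity: int, seed: int = 0) -> None:
        self.capacity = capacity
        self.items: List[T] = []
        self.n_seen = 0
        self.rng = random.Random(seed)

    def add(self, item: T) -> None:
        self.n_seen += 1
        if len(self.items) < self.capacity:
            self.items.append(item)  # buffer not full yet: always store
        else:
            j = self.rng.randrange(self.n_seen)  # uniform in [0, n_seen)
            if j < self.capacity:
                self.items[j] = item  # overwrite a random slot

    def sample(self, k: int) -> List[T]:
        """Draw a replay mini-batch without replacement."""
        return self.rng.sample(self.items, min(k, len(self.items)))


# Two hypothetical tasks of 1,000 samples each, labelled (task_id, index).
buf = ReservoirBuffer(capacity=100)
for task in range(2):
    for i in range(1000):
        buf.add((task, i))

print(len(buf.items))  # 100: memory is capped at `capacity`
```

The memory cost is fixed by `capacity`. That is the buffer size whose effect the rest of this deck studies.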

Two Levels of Forgetting

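Deep Forgetting

Loss of information in the features themselves: measured by retraining a classifier on top of the (FROZEN) feature extractor

Shallow Forgetting

Loss of accuracy in the network's own classifier head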

Networks retain more information in representations than in predictions
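Here is a minimal NumPy sketch of how the two levels can be told apart. It assumes deep forgetting is read off a probe retrained on frozen features (our reading of the "(FROZEN)" label) and shallow forgetting off the network's own head. `head_accuracy` and `probe_accuracy` are hypothetical helpers. A nearest-class-mean probe stands in for a retrained linear classifier. The toy data are made up to show a drifted head on top of features that are still separable.

```python
import numpy as np


def head_accuracy(W, b, feats, labels):
    """Accuracy of the network's own linear head (logits = feats @ W.T + b)."""
    preds = np.argmax(feats @ W.T + b, axis=1)
    return float(np.mean(preds == labels))


def probe_accuracy(train_feats, train_labels, test_feats, test_labels):
    """Accuracy of a nearest-class-mean probe fitted on frozen features.

    NCM is a cheap stand-in for retraining a linear classifier.
    """
    classes = np.unique(train_labels)
    means = np.stack([train_feats[train_labels == c].mean(axis=0) for c in classes])
    # Squared distance from every test feature to every class mean.
    d2 = ((test_feats[:, None, :] - means[None, :, :]) ** 2).sum(axis=2)
    preds = classes[np.argmin(d2, axis=1)]
    return float(np.mean(preds == test_labels))


# Toy old-task features: two well-separated classes in 2-D.
rng = np.random.default_rng(0)
class_means = np.array([[3.0, 0.0], [-3.0, 0.0]])
y = np.repeat([0, 1], 200)
X = class_means[y] + 0.3 * rng.standard_normal((400, 2))

# A drifted head that separates along the wrong axis: the head fails
# (shallow forgetting) even though the features are still separable.
W_drift = np.array([[0.0, 1.0], [0.0, -1.0]])
b = np.zeros(2)

shallow = head_accuracy(W_drift, b, X, y)
deep = probe_accuracy(X[::2], y[::2], X[1::2], y[1::2])
print(f"head accuracy {shallow:.2f}, probe accuracy {deep:.2f}")
```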

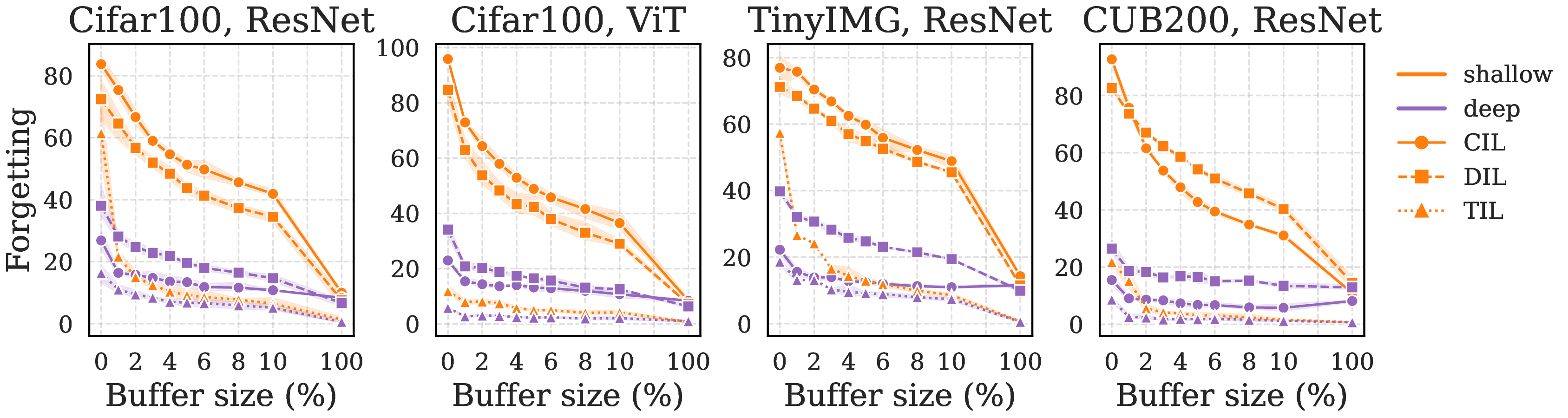

The Replay Efficiency Gap

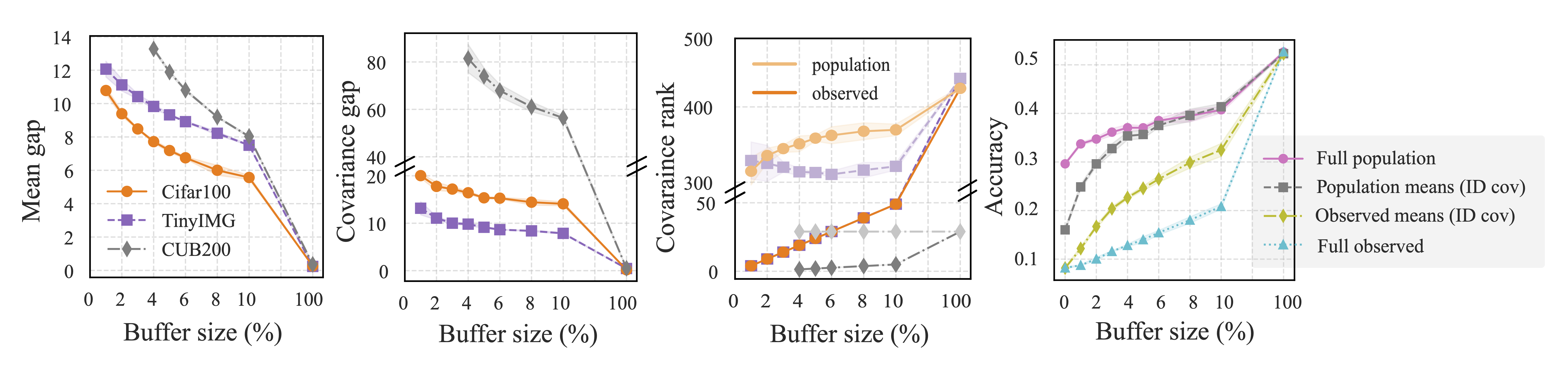

As the buffer grows, forgetting decreases at different rates in feature space and in the classifier head

Analytical Framework: Neural Collapse

- Neural Collapse (NC): geometric structure that emerges in the terminal phase of training

- NC1: Within-class variability of features → 0

- NC2: Centered class means form a simplex equiangular tight frame (ETF)

- NC3: Classifier weights align with class means: \( W_h^\top \propto \tilde{U} \)
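NC1 and NC3 can be measured directly from features. Below is a minimal NumPy sketch that follows the metrics of Papyan et al. (2020) as we understand them. NC1 is \(\operatorname{Tr}(\Sigma_W \Sigma_B^{+})/K\). NC3 is the squared Frobenius distance between the normalized \(W_h^\top\) and the normalized centered class means \(\tilde{U}\). `nc_metrics` is a hypothetical helper, not code from the paper.

```python
import numpy as np


def nc_metrics(feats, labels, W):
    """NC1 and NC3 diagnostics.

    feats: n x d features, labels: integer class ids, W: K x d classifier
    weights (one row per class).
    """
    classes = np.unique(labels)
    K = len(classes)
    d = feats.shape[1]
    global_mean = feats.mean(axis=0)
    means = np.stack([feats[labels == c].mean(axis=0) for c in classes])  # K x d
    U = (means - global_mean).T  # centered class means, d x K

    # NC1: within-class covariance relative to between-class covariance.
    sigma_w = np.zeros((d, d))
    for i, c in enumerate(classes):
        diff = feats[labels == c] - means[i]
        sigma_w += diff.T @ diff
    sigma_w /= len(feats)
    sigma_b = U @ U.T / K
    nc1 = np.trace(sigma_w @ np.linalg.pinv(sigma_b)) / K

    # NC3: distance between normalized W^T and normalized centered means.
    nc3 = np.linalg.norm(W.T / np.linalg.norm(W) - U / np.linalg.norm(U)) ** 2
    return float(nc1), float(nc3)


# Perfectly collapsed toy features: every sample sits on its class mean,
# and the classifier rows equal the centered class means.
class_means = np.eye(3)
labels = np.repeat([0, 1, 2], 10)
feats = class_means[labels]
W = class_means - class_means.mean(axis=0)

nc1, nc3 = nc_metrics(feats, labels, W)
print(f"NC1={nc1:.3f}, NC3={nc3:.3f}")  # both vanish for exact collapse
```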

Our contribution: the first extension of the NC formulation to continual learning, covering all three setups: domain-, task-, and class-incremental learning (DIL, TIL, CIL)

Focus: linear separability, characterized through class means and covariances
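Class means and covariances matter because they determine a linear classifier. Under the textbook assumption of Gaussian classes with a shared covariance \(\Sigma\), which the slide does not state, the Bayes-optimal classifier is linear (linear discriminant analysis). Its weights are \(\Sigma^{-1}\mu_c\) and its biases are \(-\tfrac{1}{2}\mu_c^\top\Sigma^{-1}\mu_c + \log \pi_c\). Here is a minimal sketch. `lda_head` is a hypothetical helper. If the means and covariance come from a small sample instead, this head moves accordingly.

```python
import numpy as np


def lda_head(means, cov, priors=None):
    """Linear head implied by Gaussian classes with a shared covariance.

    means: K x d class means, cov: d x d shared covariance.
    Returns W (K x d) and b (K,) such that logits = x @ W.T + b.
    """
    K = means.shape[0]
    priors = np.full(K, 1.0 / K) if priors is None else priors
    W = np.linalg.solve(cov, means.T).T  # row c is Sigma^{-1} mu_c
    b = -0.5 * np.sum(W * means, axis=1) + np.log(priors)
    return W, b


# Toy three-class problem in 2-D with a correlated shared covariance.
rng = np.random.default_rng(0)
means = np.array([[2.0, 0.0], [-2.0, 0.0], [0.0, 2.0]])
cov = np.array([[1.0, 0.3], [0.3, 0.5]])
y = np.repeat([0, 1, 2], 300)
X = means[y] + rng.multivariate_normal(np.zeros(2), cov, size=900)

W, b = lda_head(means, cov)
acc = float(np.mean(np.argmax(X @ W.T + b, axis=1) == y))
print(f"accuracy of the mean/covariance-derived head: {acc:.2f}")
```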

Neural Collapse emergence: features of Classes 1, 2, and 3 collapse from a disordered cloud into a simplex ETF (Papyan et al., 2020)

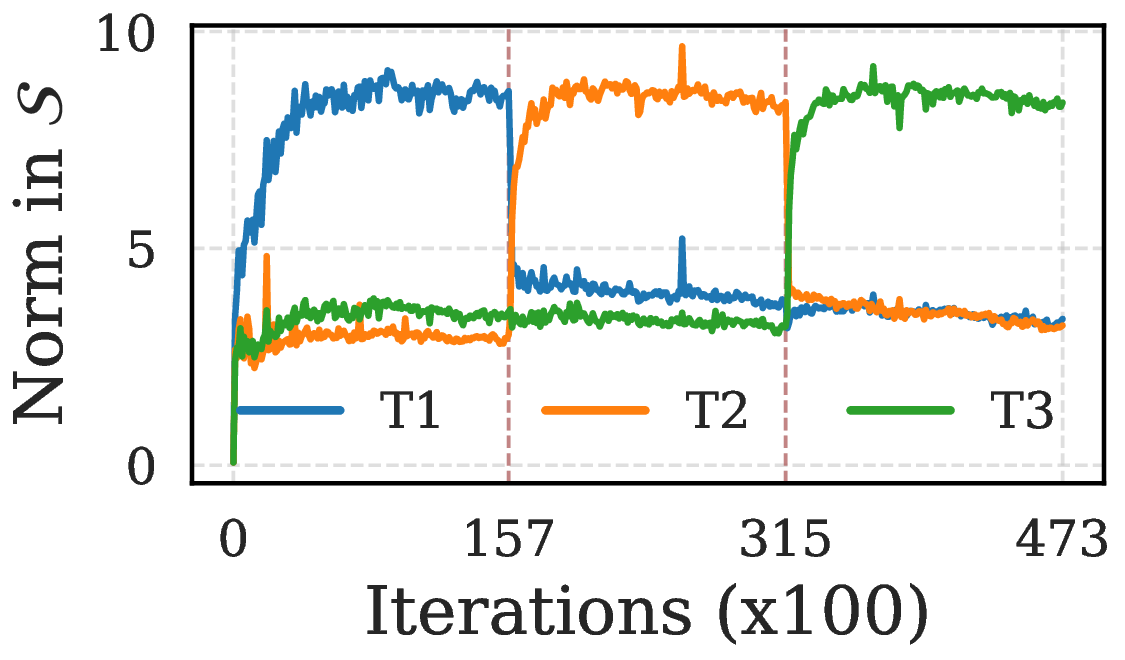
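The target geometry of NC2 can be built explicitly. For \(K\) classes, a simplex ETF is \(M = \sqrt{K/(K-1)}\,P\,(I_K - \tfrac{1}{K}\mathbf{1}\mathbf{1}^\top)\), where \(P\) is a \(d \times K\) matrix with orthonormal columns. Its columns have unit norm and pairwise cosine \(-1/(K-1)\). Here is a minimal NumPy sketch. `simplex_etf` is a hypothetical helper, and this particular construction assumes \(d \ge K\).

```python
import numpy as np


def simplex_etf(K, d, seed=0):
    """Return a d x K matrix whose columns form a simplex ETF (needs d >= K)."""
    rng = np.random.default_rng(seed)
    # Random d x K matrix with orthonormal columns.
    P, _ = np.linalg.qr(rng.standard_normal((d, K)))
    center = np.eye(K) - np.ones((K, K)) / K
    return np.sqrt(K / (K - 1)) * P @ center


M = simplex_etf(K=3, d=8)
G = M.T @ M  # Gram matrix: 1 on the diagonal, -1/(K-1) = -0.5 elsewhere
print(np.round(G, 3))
```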

Cornerstone: Subspace Stabilization

Once NC3 emerges, gradient updates are confined to the active subspace \(S\) spanned by the classifier weights

Key Insight:

- After NC3, \(\text{span}(W_h) = S\)

- Loss gradients only affect the feature components in \(S\)

- Components in \(S^\perp\) are frozen (or decay under weight decay)

This is the foundation for understanding what happens to the representations of old data
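One step of this argument can be checked numerically. For logits \(z = W_h h + b\) and cross-entropy loss, the gradient with respect to the feature is \(\nabla_h \mathcal{L} = W_h^\top(\operatorname{softmax}(z) - e_y)\). That is a linear combination of the classifier rows, so it has no component in \(S^\perp\). The sketch below assumes a plain linear head with random weights. `ce_grad_wrt_feature` is a hypothetical helper. The check covers only the gradient reaching the features, not how the backbone turns it into parameter updates.

```python
import numpy as np


def ce_grad_wrt_feature(W, b, h, y):
    """Gradient of cross-entropy w.r.t. the feature h for logits z = W h + b."""
    z = W @ h + b
    p = np.exp(z - z.max())
    p /= p.sum()   # softmax probabilities
    p[y] -= 1.0    # p - one_hot(y)
    return W.T @ p  # a linear combination of the classifier rows


rng = np.random.default_rng(0)
K, d = 3, 10
W = rng.standard_normal((K, d))  # head: K class vectors in R^d
b = np.zeros(K)

# Orthonormal basis of S = span of the rows of W, and the projector onto S-perp.
Q, _ = np.linalg.qr(W.T)  # d x K, columns span S
proj_perp = np.eye(d) - Q @ Q.T

h = rng.standard_normal(d)
g = ce_grad_wrt_feature(W, b, h, y=1)
print(np.linalg.norm(proj_perp @ g))  # ~1e-16: no gradient component in S-perp
```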

Connection to Out-of-Distribution Detection

Our Hypothesis

Forgotten samples behave like OOD data

- OOD features are orthogonal to the active subspace \(S\)

- Without replay, past-task features drift into \(S^\perp\) and decay exponentially
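Here is one way to see where the exponential rate comes from. Assume (the slides do not name the optimizer) that the features \(h = V a\) come from a final linear layer \(V\), trained with SGD at step size \(\eta\) and L2 weight decay \(\lambda\). Let \(P_\perp\) project onto \(S^\perp\). Because \(\nabla_h \mathcal{L}\) lies in \(S\), the \(S^\perp\) part of \(V\) receives no loss gradient, and only the decay term acts on it:

```latex
% Assumption: h = V a from a final linear layer V, trained with SGD
% (step size \eta) and L2 weight decay \lambda; P_\perp projects onto S^\perp.
\begin{aligned}
\nabla_V \mathcal{L} &= (\nabla_h \mathcal{L})\, a^\top,
  \quad \nabla_h \mathcal{L} \in S \;\Rightarrow\; P_\perp \nabla_V \mathcal{L} = 0,\\
P_\perp V_{t+1} &= P_\perp\bigl(V_t - \eta(\nabla_V \mathcal{L} + \lambda V_t)\bigr)
  = (1 - \eta\lambda)\, P_\perp V_t,\\
P_\perp V_t &= (1 - \eta\lambda)^t\, P_\perp V_0 .
\end{aligned}
```

For fixed upstream activations \(a\), the \(S^\perp\) part of a feature, \(P_\perp V_t a\), therefore shrinks by the same factor. For example, with \(\eta = 0.1\) and \(\lambda = 5 \times 10^{-4}\), the factor after 10,000 steps is \((1 - 5 \times 10^{-5})^{10^4} \approx e^{-0.5} \approx 0.61\).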

Related work:

- Ammar et al. (2024): the NC5 property

- Haas et al. (2023): L2 regularization and OOD detection

- Kang et al. (2024): collapse of OOD features to the origin

Past-task features project to zero in \(S\)
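To make "project to zero in \(S\)" measurable, one can compute the fraction of a feature's squared norm that lies in \(S\). Below is a minimal NumPy sketch on synthetic features. `subspace_energy` is a hypothetical score for illustration only. It is not the NC5 metric of Ammar et al. (2024) or a method from the other cited works.

```python
import numpy as np


def subspace_energy(feats, W):
    """Fraction of each feature's squared norm lying in S = span(rows of W)."""
    Q, _ = np.linalg.qr(W.T)  # orthonormal basis of S, d x K
    in_s = feats @ Q          # coordinates of each feature inside S
    return (in_s ** 2).sum(axis=1) / (feats ** 2).sum(axis=1)


rng = np.random.default_rng(0)
K, d = 3, 16
W = rng.standard_normal((K, d))
Q, _ = np.linalg.qr(W.T)
P_perp = np.eye(d) - Q @ Q.T

current = rng.standard_normal((5, K)) @ Q.T         # synthetic features inside S
forgotten = rng.standard_normal((5, d)) @ P_perp    # synthetic features in S-perp

print(subspace_energy(current, W).round(3))    # 1.0 for every sample
print(subspace_energy(forgotten, W).round(3))  # 0.0: nothing left in S
```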

Feature Space Distribution with Replay

Buffer-OOD Mixture Model: old-task features interpolate between two extremes, buffer-like (anchored in \(S\)) and OOD-like (projected to zero in \(S\))

Consequences for Old Data

With replay, NC emerges consistently across tasks

Without Replay

- Old-task representations drift into \(S^\perp\)

- They decay exponentially under weight decay

With Replay

- Representations stay anchored in \(S\)

- The buffer provides a foothold in the active subspace

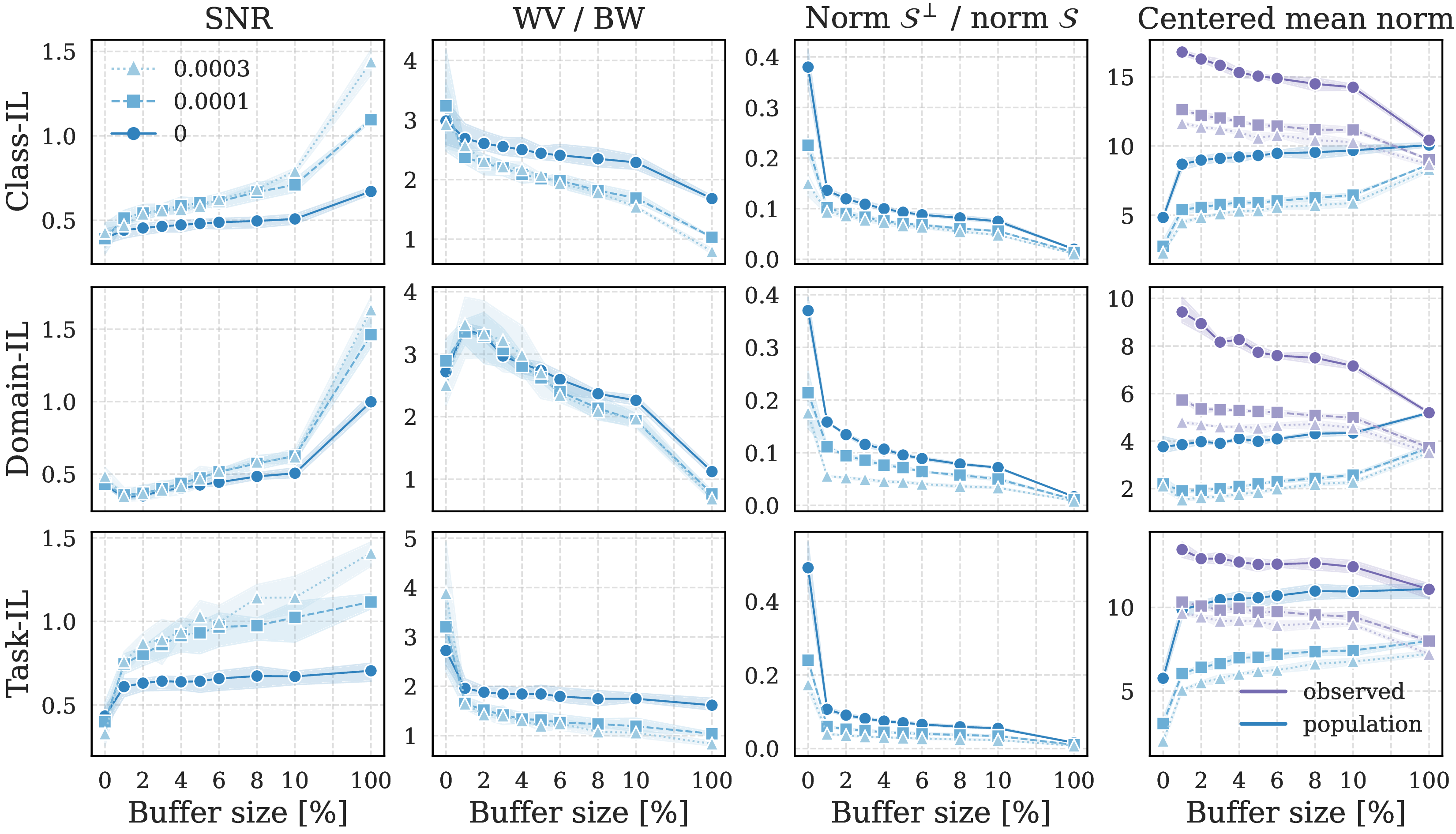

Why Shallow Forgetting Persists

If features are separable, why do classifiers fail?

- Strong Collapse: Small buffers induce rank-deficient covariances

- Under-determined Classifier: Multiple "buffer-optimal" boundaries fit stored samples perfectly

- Statistical Gap: Buffer estimates deviate from population statistics

Illustration: features remain separable, but with small buffers the classifier boundaries misalign
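Here is a toy illustration of the under-determined classifier. It uses made-up Gaussian data, and a least-squares linear head stands in for a trained one. When the buffer has fewer samples than feature dimensions, adding any null-space direction of the buffer matrix leaves every buffer prediction unchanged. The same change can still move the decision boundary on the rest of the population.

```python
import numpy as np

rng = np.random.default_rng(0)
d, n_pop = 20, 1000  # feature dimension exceeds the buffer size below

# Hypothetical old-task population: two Gaussian classes separated along axis 0.
mu = np.zeros(d)
mu[0] = 2.0
y_pop = np.repeat([1.0, -1.0], n_pop // 2)
X_pop = y_pop[:, None] * mu + rng.standard_normal((n_pop, d))

# Tiny replay buffer: 3 stored samples per class.
idx = np.concatenate([rng.choice(500, 3, replace=False),
                      500 + rng.choice(500, 3, replace=False)])
X_buf, y_buf = X_pop[idx], y_pop[idx]

# Minimum-norm linear fit that reproduces the buffer labels exactly.
w0 = np.linalg.pinv(X_buf) @ y_buf

# A direction in the null space of X_buf: invisible to every buffer sample.
_, _, Vt = np.linalg.svd(X_buf)
null_dir = Vt[-1]
w1 = w0 + 10.0 * null_dir  # a second "buffer-optimal" classifier

for name, w in [("w0", w0), ("w1", w1)]:
    buf_acc = np.mean(np.sign(X_buf @ w) == y_buf)
    pop_acc = np.mean(np.sign(X_pop @ w) == y_pop)
    print(f"{name}: buffer acc {buf_acc:.2f}, population acc {pop_acc:.2f}")
```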

Deconstructing the Statistical Gap

Covariance Deficiency

The buffer covariance \(\hat{\Sigma}\) is rank-deficient and blind to variance in \(S^\perp\)

The rank gap persists until the buffer holds ≈ 100% of the data
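Here is a toy check of the rank argument. It assumes a 512-dimensional feature space, 500 samples per class, and isotropic Gaussian features; all three choices are hypothetical. A covariance estimated from \(n\) samples has rank at most \(n - 1\). So when a class has fewer samples than feature dimensions, the buffer covariance reaches the population's rank only once the buffer holds all of them.

```python
import numpy as np

rng = np.random.default_rng(0)
d, n_pop = 512, 500                     # hypothetical feature dim and class size
pop = rng.standard_normal((n_pop, d))   # synthetic features of one old class

pop_rank = np.linalg.matrix_rank(np.cov(pop, rowvar=False))
results = []
for frac in [0.01, 0.1, 0.5, 1.0]:
    n = int(frac * n_pop)
    buf = pop[rng.choice(n_pop, n, replace=False)]
    rank = np.linalg.matrix_rank(np.cov(buf, rowvar=False))
    results.append((n, rank))
    # The rank equals n - 1 here, so it matches the population only at 100%.
    print(f"buffer {frac:5.0%}: {n:3d} samples, covariance rank {rank} (population: {pop_rank})")
```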

Mean Norm Inflation

Buffer class means exhibit inflated norms due to repulsive forces

\(\|\hat{\mu}_c\| > \|\mu_c\|\) for small buffers, where \(\hat{\mu}_c\) is the buffer mean and \(\mu_c\) the population mean of class \(c\)
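The slide attributes the inflation to repulsive forces during training. Separately from that mechanism, plain sampling also biases the norm upward. For \(n\) i.i.d. samples with covariance \(\Sigma\), \(\mathbb{E}\|\hat{\mu}_c\|^2 = \|\mu_c\|^2 + \operatorname{tr}(\Sigma)/n\). Below is a minimal NumPy check under an assumed isotropic Gaussian class. The numbers are toy values, not the paper's data.

```python
import numpy as np

rng = np.random.default_rng(0)
d = 128
mu = np.zeros(d)
mu[0] = 1.0  # hypothetical population class mean with ||mu|| = 1

mean_sq_norms = {}
for n in [5, 50, 500]:
    # 200 buffers of n i.i.d. samples from N(mu, I), so tr(Sigma) = d.
    sq = [np.sum((mu + rng.standard_normal((n, d))).mean(axis=0) ** 2) for _ in range(200)]
    mean_sq_norms[n] = float(np.mean(sq))
    print(f"n={n:3d}: avg ||mu_hat||^2 = {mean_sq_norms[n]:.2f} vs 1 + d/n = {1 + d / n:.2f}")
```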

The gap between population and buffer statistics persists across buffer sizes

Conclusions & Implications

- Small buffers already mitigate deep forgetting: large buffers are not needed to maintain feature-space geometry

- The replay asymmetry: the statistical gap between buffer and population causes shallow forgetting

- Future direction: explicitly correcting these statistical artifacts could unlock robust CL with minimal replay

Thank you!